Skills: Up, Down and Across

There has been a lot of debate recently on how to gather skills data.

At many companies, skills data is gathered as part of annual (or quarterly) performance reviews. One large Vancouver engineering firm requires employees to update their skill information as part of their annual review, and they must complete this step to receive their bonus. At least this company lets people define their own skills. Other companies we follow are more regimented: a large player in the Vancouver apparel industry requires its people to choose the skills appropriate to the role they are in.

In a recent Harvard Business Review article, “The Unsexy Fundamentals of Great HR,” Marc Effron and Miriam Ort list five claims frequently made by HR professionals. One of these is “3. Self-assessments of performance are more accurate than peer assessments but less accurate than a manager’s assessment.” They identify this claim as false. At TeamFit we believe that peer assessments, properly analyzed, are more accurate than either self-assessments or manager assessments.

Why do we believe this? After all, there are real reasons to be suspicious of peer assessments:

- There is peer pressure in many teams to award high scores.
- Some teams show ‘grieving’ behavior, in which one person is made a scapegoat and downgraded, or competitive cliques appear.
- Peers only have their own experience to judge from and may not be aware of best practices (sometimes referred to as ‘leveling down’).

These are all legitimate concerns. The first two are relatively easy to deal with: our underlying machine learning system (a Bayesian network, for those who care about geeky details) is being built to identify these behaviors and take them into account.

The ‘leveling down’ issue will be harder to address, and we are taking two approaches to it. The first is to link our analytics to project outcomes and then look for the patterns of skills and people that correlate with the best outcomes. It will take a while to gather the data we need, but we are getting started with this approach in the fall.

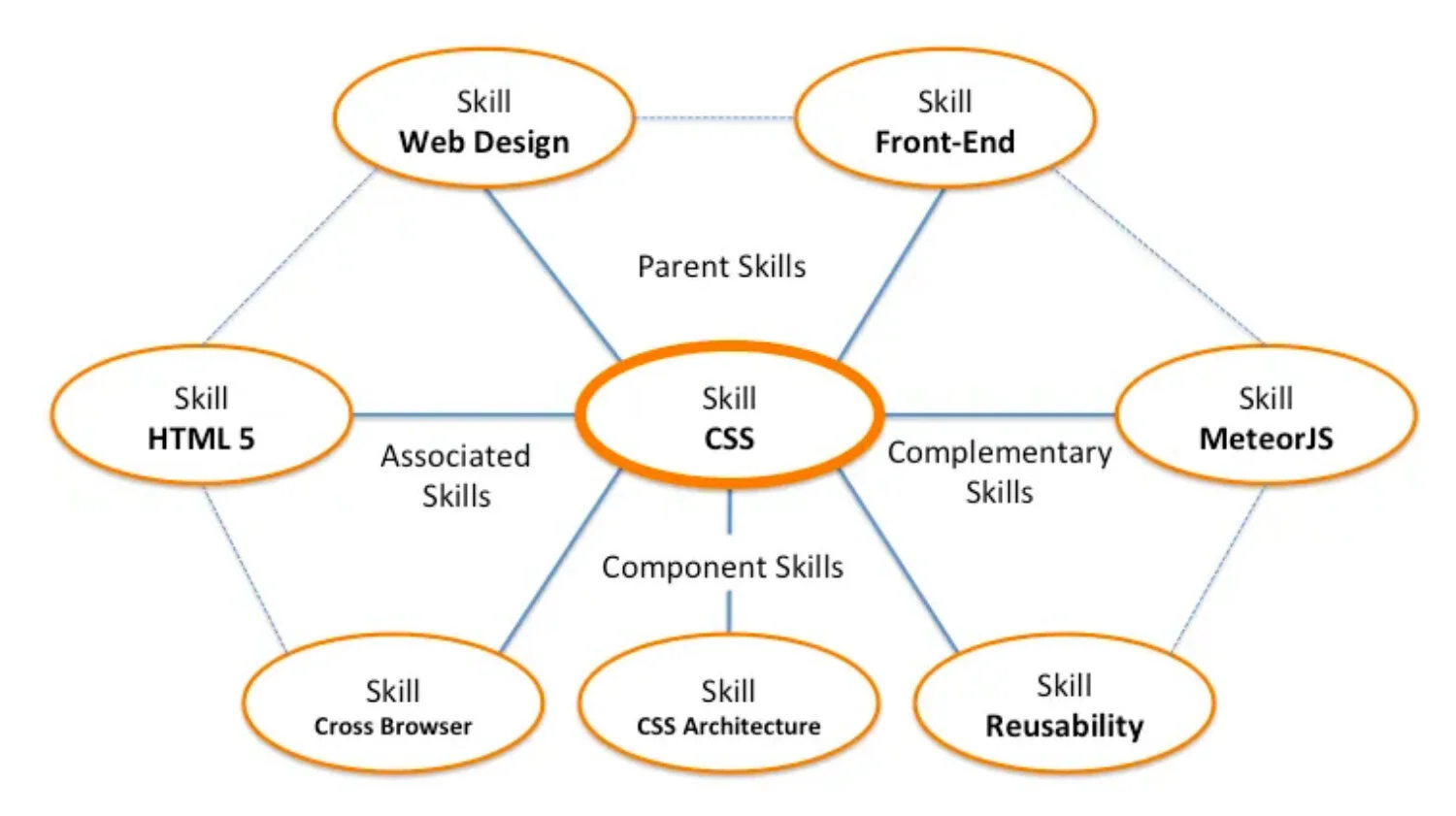

Our second approach is to look at the whole skill graph, and not just any single skill, when calculating SkillRank™. What is a ‘skill graph’? It is the pattern of connections between skills. In the TeamFit graph, skills are the nodes and the relations between skills are the edges (the graph can also be extended to include the relationships between skills and people). Our hypothesis is that we can identify patterns in the skill graph associated with expertise.
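A minimal sketch of the graph structure described above: skills as nodes, labelled skill-to-skill edges, and the optional extension that links people to skills. The `SkillGraph` class, its method names and the relation labels are hypothetical; it only stores the structure and does not compute SkillRank™.

```python
from collections import defaultdict

class SkillGraph:
    """Skills are nodes; labelled undirected edges connect related skills.
    People are an optional second kind of node linked to the skills they hold."""

    def __init__(self):
        self.skill_edges = defaultdict(dict)  # skill -> {other skill: relation label}
        self.person_skills = defaultdict(set)  # person -> skills they hold
        self.skill_people = defaultdict(set)   # skill -> people who hold it

    def relate(self, a, b, relation):
        # Store the edge in both directions so lookups work from either skill.
        self.skill_edges[a][b] = relation
        self.skill_edges[b][a] = relation

    def add_person_skill(self, person, skill):
        self.person_skills[person].add(skill)
        self.skill_people[skill].add(person)

    def related(self, skill, relation=None):
        """Skills linked to `skill`, optionally filtered by relation label."""
        return {other for other, label in self.skill_edges.get(skill, {}).items()
                if relation is None or label == relation}

    def people_with(self, skill):
        return set(self.skill_people.get(skill, ()))

g = SkillGraph()
g.relate("python", "pandas", "associated")
g.relate("python", "ux design", "complementary")
g.add_person_skill("dana", "python")
```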

Associated skills are those we expect to find together: if a person claims one, we expect them to have the other, and we expect their SkillRanks for the two skills to be correlated. Complementary skills are different. We have no expectation that a person will have two complementary skills, though you will probably want complementary skills together on a team.
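One simple way to test whether two skills behave as associated skills is co-occurrence lift over the skill sets people claim: a lift above 1 means the pair appears together more often than it would if the skills were independent. This is our illustrative choice, not a method stated by TeamFit, and `lift` and the profiles below are hypothetical. A complementary pair gives no such signal, which matches the point that we have no expectation one person holds both.

```python
def lift(profiles, a, b):
    """Co-occurrence lift of skills a and b across people's skill sets.

    lift = P(a and b) / (P(a) * P(b)); values above 1 mean the skills
    appear together more often than if they were independent.
    """
    n = len(profiles)
    has_a = sum(1 for skills in profiles if a in skills)
    has_b = sum(1 for skills in profiles if b in skills)
    both = sum(1 for skills in profiles if a in skills and b in skills)
    if has_a == 0 or has_b == 0:
        return 0.0  # undefined when either skill is never claimed; report 0
    # Algebraically equal to (both/n) / ((has_a/n) * (has_b/n)).
    return both * n / (has_a * has_b)

# Hypothetical skill profiles, one set per person.
profiles = [
    {"python", "pandas"},
    {"python", "pandas", "sql"},
    {"ux design"},
    {"python"},
]
lift(profiles, "python", "pandas")     # 4/3: claimed together more often than chance
lift(profiles, "python", "ux design")  # 0.0: never claimed together
```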

Where do all these skills come from? One approach is to have a formal competency library for the organization. A number of such libraries are available (see this list on Deloitte Bersin), and there are consulting firms that will build one for you. We think this is a bad approach. Why?

- The library will not be current. Business is moving so quickly today that any formal library rapidly becomes obsolete and incomplete.
- The terms will not capture the actual language used in the field.
- The skill graph (if the library has one at all) will be rigid, whereas every individual, team and organization has a unique skill graph that is part of its identity and differentiation.

The counterargument is that a top-down library is needed to standardize terminology and make comparisons possible. This is essentially the argument that erupted between proponents of taxonomies and folksonomies ten years ago, and the solution is the same: the open system has to include support for mapping terms, reducing duplication and clarifying meaning. In fact, the skill graph itself is the best way to clarify meaning. If we want to understand what is involved in applying a skill, we should look at how it is actually used in projects, what other skills it is used with, and the component skills that contribute to it.
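As a minimal sketch of the "mapping terms, reducing duplication" support in a bottom-up system, assuming a hand-maintained alias table, free-form skill tags can be normalized and de-duplicated before they enter the graph. The `ALIASES` entries and the functions `normalize` and `merge_tags` are hypothetical.

```python
import re

# Hypothetical alias table: variant spellings -> canonical skill term.
ALIASES = {
    "js": "javascript",
    "java script": "javascript",
    "ml": "machine learning",
}

def normalize(tag, aliases=ALIASES):
    """Lowercase, collapse internal whitespace, then map known aliases."""
    key = re.sub(r"\s+", " ", tag.strip().lower())
    return aliases.get(key, key)

def merge_tags(tags, aliases=ALIASES):
    """Normalize tags and drop duplicates, keeping first-seen order."""
    return list(dict.fromkeys(normalize(t, aliases) for t in tags))

merge_tags(["JS", "JavaScript", "  Machine   Learning ", "ml"])
# ['javascript', 'machine learning']
```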

Top-down competency libraries kill innovation, prevent real communication (which has to be two-way) and lock organizations into fossilized views of their skills.

TeamFit takes a bottom-up approach to skills, one in which skills are defined and measured in the context of actual project work, where the skills really matter.

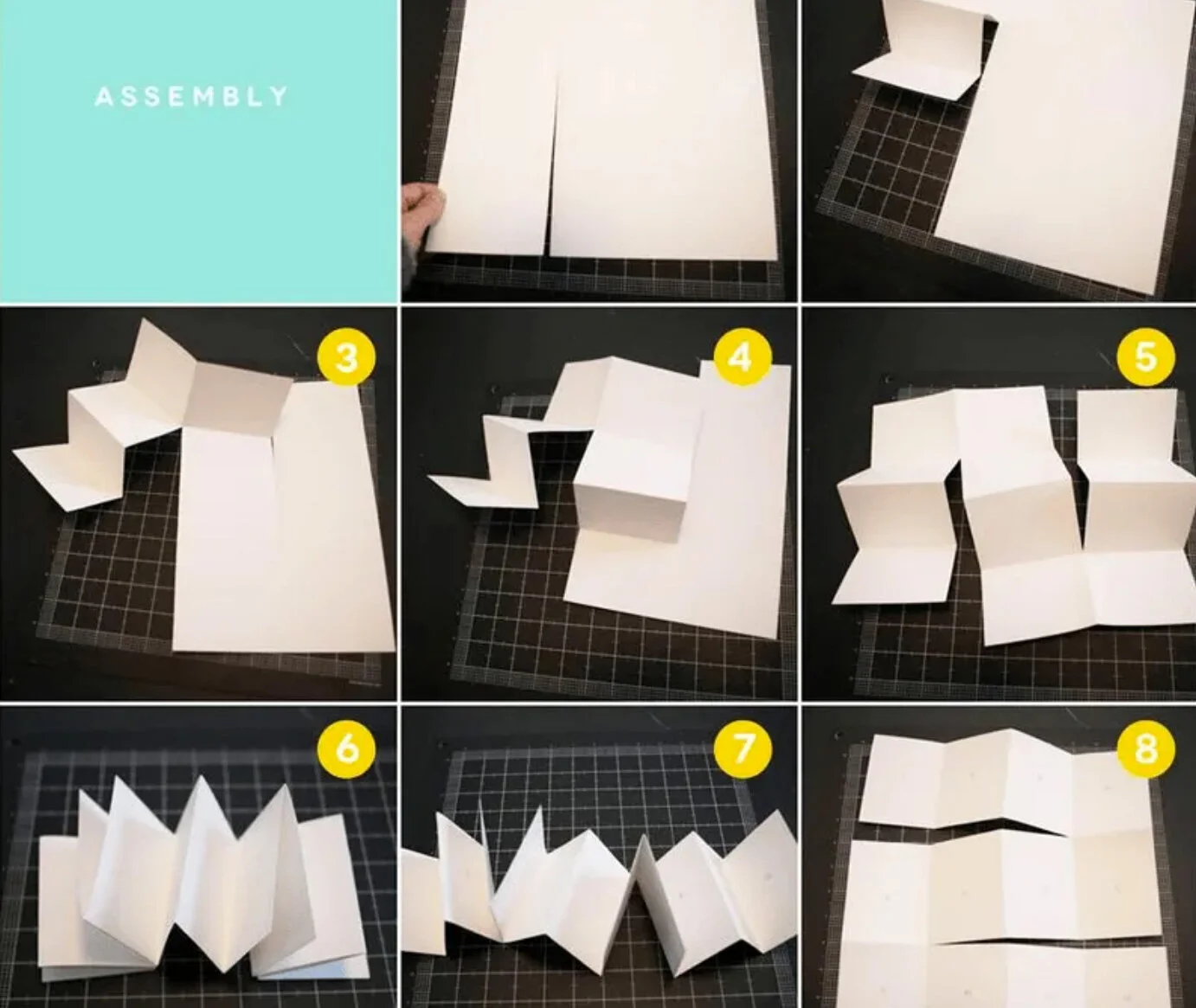

Image from October Afternoon, a lovely little tutorial on how to make an accordion book (a skill I would like to learn).